The Missing Middle in IoT — From Embedded Device to AWS Cloud

There's a part of most IoT projects that never makes it into the product demo.

The dashboard gets shown. The connected device gets shown. Maybe the analytics layer gets shown too. But the hard part, the part that quietly consumes weeks of engineering time, sits in the middle: getting an embedded device to boot, identify itself, provision securely, connect to the cloud, and accept application updates without someone SSH-ing into it at 11pm.

That middle layer is what this project was built to address.

Cloud-Native IoT Reference Architecture with Arm SystemReady started life as a master's thesis developed at ACP Engineering. The goal wasn't to build yet another IoT platform. It was to answer a more practical question: how do you make embedded systems engineers and cloud engineers meet in the same architecture without both sides having to reinvent the bridge every time?

The result is now open source: a reference architecture built around AWS, AWS IoT Greengrass, fleet provisioning, and an Arm-based device image designed to get hardware into the cloud with less manual work and less guesswork.

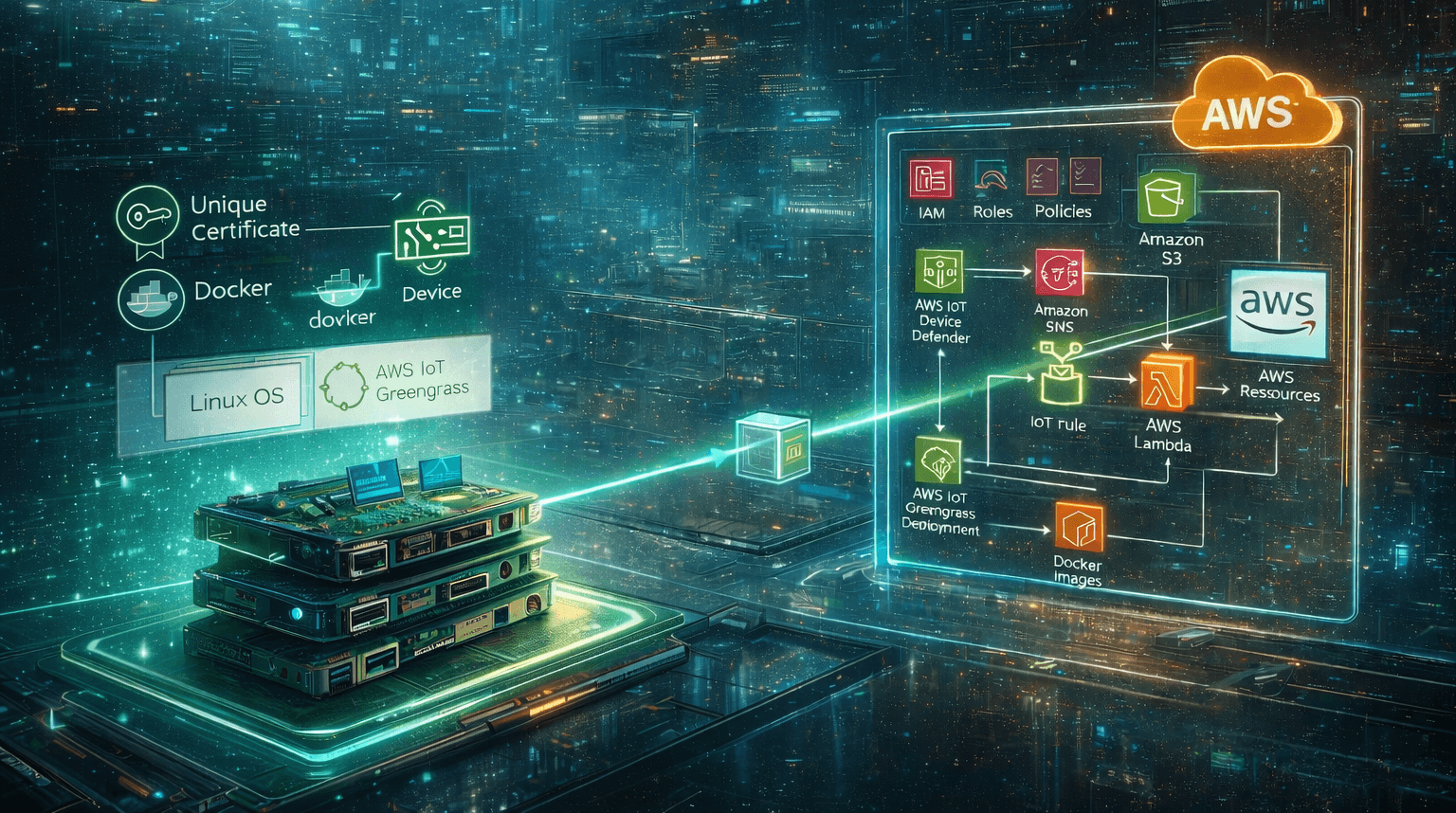

Here's the high-level architecture the project is built around:

The problem: embedded and cloud teams meet too late

This is a familiar pattern.

An embedded team has a board, a Linux image, and an application that works locally. A cloud team has an AWS account, a security model, and a target architecture for ingestion, deployment, and monitoring. Both teams are doing reasonable work. The problem is that integration often happens late, and when it does, every assumption becomes expensive.

Who creates the device identity? How does first-boot provisioning work? Where do certificates come from, and how are they rotated later? How are updates deployed to a fleet instead of a single demo unit? How much of the cloud setup is reproducible, and how much exists only in someone's terminal history?

That is where projects slow down. Not because either side lacks capability, but because the handover between hardware and cloud is usually under-designed.

A good reference architecture helps by making those decisions explicit. It gives teams a starting point that already covers the fundamentals: provisioning, security, deployment, messaging, and repeatability.

What this architecture actually does

The repository breaks the problem into three clean layers.

The AWS control plane. Infrastructure is defined as code using Pulumi in Python. The stack provisions AWS IoT Core, Greengrass-related resources, fleet provisioning templates, IoT policies, a role alias for token exchange, certificate lifecycle Lambdas, Device Defender audit scheduling, SNS notifications, S3 buckets, and the supporting IAM roles around them.
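To make one of those resources concrete, here is a minimal sketch of what a fleet provisioning template body can look like, built as a plain Python dictionary and serialised to JSON. It is not taken from the repository: the function name, the `SerialNumber` parameter, the thing-name prefix, and the policy name are all assumptions chosen for illustration.

```python
import json


def build_provisioning_template(thing_prefix: str, policy_name: str) -> str:
    """Return a fleet provisioning template body as a JSON string.

    thing_prefix and policy_name are hypothetical values; the real
    project may name and structure its template differently.
    """
    template = {
        "Parameters": {
            # Supplied by the device during provisioning (assumed name).
            "SerialNumber": {"Type": "String"},
            # Filled in by AWS IoT with the newly issued certificate.
            "AWS::IoT::Certificate::Id": {"Type": "String"},
        },
        "Resources": {
            "thing": {
                "Type": "AWS::IoT::Thing",
                "Properties": {
                    # Thing name = prefix + serial number.
                    "ThingName": {
                        "Fn::Join": ["", [thing_prefix, {"Ref": "SerialNumber"}]]
                    },
                },
            },
            "certificate": {
                "Type": "AWS::IoT::Certificate",
                "Properties": {
                    "CertificateId": {"Ref": "AWS::IoT::Certificate::Id"},
                    "Status": "Active",
                },
            },
            "policy": {
                "Type": "AWS::IoT::Policy",
                "Properties": {"PolicyName": policy_name},
            },
        },
    }
    return json.dumps(template, indent=2)


if __name__ == "__main__":
    print(build_provisioning_template("demo-device-", "demo-device-policy"))
```

In an infrastructure-as-code stack, a string like this would typically be passed as the template body of the provisioning template resource, so the template lives in version control next to the policies it references.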

The device bootstrap layer. A custom OS image is assembled with Packer and shell scripts. The current implementation is centred on Raspberry Pi OS Lite. On first boot, the device installs Docker and Greengrass, reads its local identity data, fills in the provisioning config, and connects to AWS using claim credentials so it can receive its own device-specific certificate.

The application layer. Device-side applications are packaged as Docker containers and deployed as Greengrass components. In the demo setup, that includes a simple LED app and a button app to prove bidirectional messaging between cloud and edge, plus a certificate rotation component that handles renewal on the device itself.
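As a rough illustration of "a Docker container deployed as a Greengrass component", the sketch below builds a component recipe as a Python dictionary. The component name, version, and image URI are hypothetical, and the recipe is deliberately minimal: a real LED or button app would likely need extra `docker run` options (for example device access for GPIO) that are not shown here.

```python
import json


def build_container_recipe(name: str, version: str, image: str) -> dict:
    """Return a minimal Greengrass v2 recipe that runs one Docker image.

    name, version, and image are placeholders for illustration only.
    """
    return {
        "RecipeFormatVersion": "2020-01-25",
        "ComponentName": name,
        "ComponentVersion": version,
        "ComponentDependencies": {
            # Lets Greengrass pull the image declared under Artifacts.
            "aws.greengrass.DockerApplicationManager": {
                "VersionRequirement": "~2.0.0"
            },
        },
        "Manifests": [
            {
                "Platform": {"os": "linux"},
                # Start the container; --rm cleans it up when it exits.
                "Lifecycle": {"Run": f"docker run --rm {image}"},
                # The docker: URI scheme marks this artifact as an image.
                "Artifacts": [{"URI": f"docker:{image}"}],
            }
        ],
    }


if __name__ == "__main__":
    recipe = build_container_recipe(
        "com.example.LedApp", "1.0.0", "registry.example.com/led-app:1.0.0"
    )
    print(json.dumps(recipe, indent=2))
```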

That separation matters. It means the project is not just a pile of scripts. It is a repeatable path from cloud provisioning to device image creation to fleet deployment.

The reference architecture was also validated on a real edge setup, combining the device image, Greengrass components, and simple hardware-driven demo applications.

First boot is where the reference architecture proves itself

The most useful part of the project is not the diagram. It's the first-boot flow.

Once the infrastructure is deployed, CI writes the runtime configuration to a file: the AWS region, IoT endpoints, role alias, and provisioning template details. That file, along with the claim credentials and an allowlist, is uploaded to S3 as part of the bootstrap process.

When a device boots for the first time, the provisioning script takes over. It installs what the device needs, reads the serial number, prepares the Greengrass configuration, and starts the fleet provisioning process. The device uses the shared claim certificate only long enough to request a unique certificate of its own.
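The "read the serial number, prepare the Greengrass configuration" step could look roughly like the sketch below. It assumes the serial is taken from a `Serial` line in `/proc/cpuinfo` (as on Raspberry Pi OS) and that the device uses the Greengrass fleet provisioning plugin. The runtime dictionary keys, file paths, and the `SerialNumber` template parameter are assumptions, not the repository's actual names. The config is emitted as JSON, which a YAML parser should also accept.

```python
import json
import re


def read_serial(cpuinfo_text: str) -> str:
    """Extract the board serial from /proc/cpuinfo-style text."""
    match = re.search(
        r"^Serial\s*:\s*([0-9a-fA-F]+)\s*$", cpuinfo_text, re.MULTILINE
    )
    if match is None:
        raise ValueError("no Serial line found")
    return match.group(1).lower()


def render_greengrass_config(runtime: dict, serial: str) -> str:
    """Fill the fleet provisioning plugin config from runtime values.

    The keys of `runtime` and all paths below are hypothetical.
    """
    root = "/greengrass/v2"
    plugin_config = {
        "rootPath": root,
        "awsRegion": runtime["region"],
        "iotDataEndpoint": runtime["iot_data_endpoint"],
        "iotCredentialEndpoint": runtime["iot_cred_endpoint"],
        "iotRoleAlias": runtime["role_alias"],
        "provisioningTemplate": runtime["template_name"],
        # Shared claim credentials baked into or fetched for the image.
        "claimCertificatePath": f"{root}/claim-certs/claim.pem.crt",
        "claimPrivateKeyPath": f"{root}/claim-certs/claim.private.pem.key",
        "rootCaPath": f"{root}/AmazonRootCA1.pem",
        # Passed to the provisioning template as parameters.
        "templateParameters": {"SerialNumber": serial},
    }
    config = {
        "services": {
            "aws.greengrass.FleetProvisioningByClaim": {
                "configuration": plugin_config
            }
        }
    }
    return json.dumps(config, indent=2)


if __name__ == "__main__":
    with open("/proc/cpuinfo", encoding="utf-8") as handle:
        serial = read_serial(handle.read())
    print(serial)
```

Keeping the serial lookup and the rendering as separate functions makes the first-boot logic testable on a development machine, without a board attached.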

Before AWS admits it, a pre-provisioning Lambda checks whether that serial number is on the allowlist stored in S3. If it is, the device is registered and brought into the system. If it isn't, the process stops there.
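A pre-provisioning hook of this kind can be quite small. The sketch below assumes the device sends its serial as a template parameter named `SerialNumber` and that the allowlist is a list of serial strings. To keep the example runnable without AWS access, the S3 read is replaced by an injected `load_allowlist` callable, which is a hypothetical helper. The `allowProvisioning` key in the response is what AWS IoT expects from a pre-provisioning hook.

```python
from typing import Callable, Iterable


def make_handler(load_allowlist: Callable[[], Iterable[str]]):
    """Build a Lambda-style handler around an allowlist source.

    In a deployed function, load_allowlist would fetch and parse the
    allowlist object from S3; here it is injected (an assumption).
    """

    def handler(event: dict, context: object = None) -> dict:
        # AWS IoT passes the device-supplied template parameters here.
        serial = event.get("parameters", {}).get("SerialNumber", "")
        normalised = serial.strip().lower()
        allowed = {entry.strip().lower() for entry in load_allowlist()}
        # Deny by default: empty or unknown serials are rejected.
        return {"allowProvisioning": bool(normalised) and normalised in allowed}

    return handler


if __name__ == "__main__":
    handler = make_handler(lambda: ["10000000abcdef12"])
    print(handler({"parameters": {"SerialNumber": "10000000ABCDEF12"}}))
    print(handler({"parameters": {"SerialNumber": "ffffffffffffffff"}}))
```

The deny-by-default shape is the important design choice: a missing parameter, an empty allowlist, or a typo in the serial all end with the device being refused.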

That sounds simple. It isn't. It's exactly the kind of workflow that tends to be handled manually in real projects, which is why it becomes brittle. Here, it's automated and visible.

Security is not an afterthought here

One of the strongest parts of the repository is that it doesn't stop at initial provisioning.

Certificate lifecycle is built into the architecture. AWS IoT Device Defender runs audits, SNS distributes findings, Lambda functions handle the cloud-side steps, and a Greengrass component on the device generates a CSR, receives a new certificate over MQTT, updates the local certificate files, acknowledges the change, and restarts Greengrass.
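The device-side "update the local certificate files, acknowledge the change" step is the one most likely to leave a device stranded if it fails halfway, so it is worth sketching. The example below is not the project's component. It assumes the new certificate arrives as a JSON payload with hypothetical `certificatePem` and `certificateId` fields, writes it atomically, and returns an acknowledgement to publish. CSR generation, MQTT transport, and the Greengrass restart are left out.

```python
import json
import os
import tempfile


def install_certificate(payload: bytes, cert_path: str) -> dict:
    """Atomically replace cert_path with the certificate in payload.

    The payload field names are assumptions for this sketch.
    """
    message = json.loads(payload)
    pem = message["certificatePem"]
    if "-----BEGIN CERTIFICATE-----" not in pem:
        raise ValueError("payload does not contain a PEM certificate")

    directory = os.path.dirname(os.path.abspath(cert_path))
    # Write to a temp file in the same directory so the final rename
    # stays on one filesystem and is atomic.
    fd, tmp_path = tempfile.mkstemp(dir=directory, prefix=".cert-")
    try:
        with os.fdopen(fd, "w") as handle:
            handle.write(pem)
            handle.flush()
            os.fsync(handle.fileno())
        os.replace(tmp_path, cert_path)
    except BaseException:
        # Leave the old certificate untouched if anything went wrong.
        if os.path.exists(tmp_path):
            os.unlink(tmp_path)
        raise

    # Acknowledgement for the cloud side to confirm the rotation.
    return {"status": "installed", "certificateId": message.get("certificateId")}
```

Because the old file is only replaced after the new one is fully written and synced, a power loss mid-rotation leaves the device with a complete certificate, either the old or the new one.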

That closed loop matters because many reference architectures get the first connection working and stop there. Production systems do not get to stop there. Devices stay in the field. Certificates expire. Rotation has to be designed, not postponed.

The same is true of the CI/CD setup. GitHub Actions uses OIDC to assume AWS roles without storing long-lived AWS keys in the repository. Infrastructure deployment, provisioning asset preparation, image creation, app testing, component publication, and deployment all sit in one reproducible chain. That is a better starting point than the usual collection of tribal knowledge and half-documented shell commands.
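For readers who have not set up GitHub OIDC before, the key piece on the AWS side is the trust policy of the role that the workflow assumes. Here is a minimal sketch of such a policy as a Python dictionary. The account ID, repository name, and branch restriction are placeholders, and the project's actual policy may scope access differently.

```python
import json

GITHUB_OIDC_HOST = "token.actions.githubusercontent.com"


def build_trust_policy(account_id: str, repo: str, ref: str = "refs/heads/main") -> dict:
    """Return an IAM trust policy letting one repo/ref assume a role.

    account_id, repo, and ref are hypothetical example inputs.
    """
    provider_arn = f"arn:aws:iam::{account_id}:oidc-provider/{GITHUB_OIDC_HOST}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Federated": provider_arn},
                "Action": "sts:AssumeRoleWithWebIdentity",
                "Condition": {
                    # Token must be issued for AWS STS.
                    "StringEquals": {f"{GITHUB_OIDC_HOST}:aud": "sts.amazonaws.com"},
                    # Only workflows from this repo and ref may assume the role.
                    "StringLike": {f"{GITHUB_OIDC_HOST}:sub": f"repo:{repo}:ref:{ref}"},
                },
            }
        ],
    }


if __name__ == "__main__":
    policy = build_trust_policy("123456789012", "example-org/example-repo")
    print(json.dumps(policy, indent=2))
```

Restricting the `sub` claim to a specific repository and ref is what keeps the role from being assumable by arbitrary GitHub workflows.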

Where the architecture is strong, and where it is still narrow

The project does a lot well.

It makes the boundary between embedded and cloud engineering much clearer. It packages cloud provisioning, image generation, device onboarding, and application deployment into one coherent flow. It is also unusually honest about the operational parts of IoT: identity, certificate handling, deployment mechanics, and fleet behaviour.

But it is also important to say what it is not.

Despite the Arm SystemReady framing, the practical implementation is still closely tied to Raspberry Pi OS Lite and Raspberry Pi-oriented demo applications. That makes it a solid AWS reference implementation, but not yet a universally portable blueprint across every Arm SystemReady-certified board.

That limitation mirrors what the original thesis work found in practice. Raspberry Pi 4 worked. Extending the same image strategy to other Arm SystemReady-certified devices proved harder than expected, largely because bootloader compatibility and board-specific details still matter. In other words: SystemReady helps, but it does not make hardware diversity disappear.

That's not a failure. It's a useful engineering truth.

Why open-sourcing this matters

The real value of this repository is not that it solves every IoT architecture problem. It's that it turns a messy integration zone into something concrete and inspectable.

If you're an embedded engineer, it shows what the cloud side actually needs from a device boot process. If you're a cloud engineer, it shows what has to exist on the image and on the board for provisioning to work cleanly. And if you're managing both sides, it gives you a starting point that is much closer to production reality than a PowerPoint architecture diagram.

That makes it a good fit for ACP Engineering's broader open-source posture. We are not especially interested in black-box infrastructure. We are more interested in giving teams something real they can read, run, challenge, and adapt.

This repository does that.

It started as a thesis project. It is more useful now as a reference point: a concrete example of how to connect embedded systems and AWS with repeatable provisioning, edge runtime management, and a security model that extends beyond day one.

If your team has ever lost time in the gap between "the board boots" and "the fleet is operational," this is the part worth studying.

Explore the Project

If you want to explore the implementation in detail, the full project is available on GitHub:
